The concept of virtual patching has set me off on a small rant.

If you’re not familiar, the concept is something like this: vulnerability scanners determine that PC42 in the CritStuff system has a nasty problem, but you can’t patch it for reasons. So instead, software magically figures out that exploiting this vulnerability requires access to port 80, and acts to block anything headed to PC42’s port 80.

I’m down on two concepts here: the first is high risk automation. I have scars from network admission control. I’ve seen SEC filings delayed because of a properly quarantined laptop, never mind the attack ships on fire off the shoulder of Orion. Blindly implemented policy has high risk, and some knowledge of context is needed to make a proper risk-reward calculation. People aren’t perfect, but they’re better at this than software is.

The second concept I don’t trust is a requirement for pre-learning. Anything that requires the customer to learn in great detail how their systems work and what the dependencies are before they can safely act has put too much burden on the customer. Anyone remember host-based intrusion prevention systems? How about application virtualization? The environments that are simple enough to manage this way do not have sufficient resources attached to support a software vendor. Said differently, this approach has failed to find market traction enough times that it is now available for free as open source (though you can certainly find some vendors selling support and integration).

One is supposed to argue that the virtual patching tool, like learning mode IPS before it, is able to save the customer the trouble of learning… except using those automatic tools just leads to learning about the dependency by accident instead, and therefore is still a market fail.

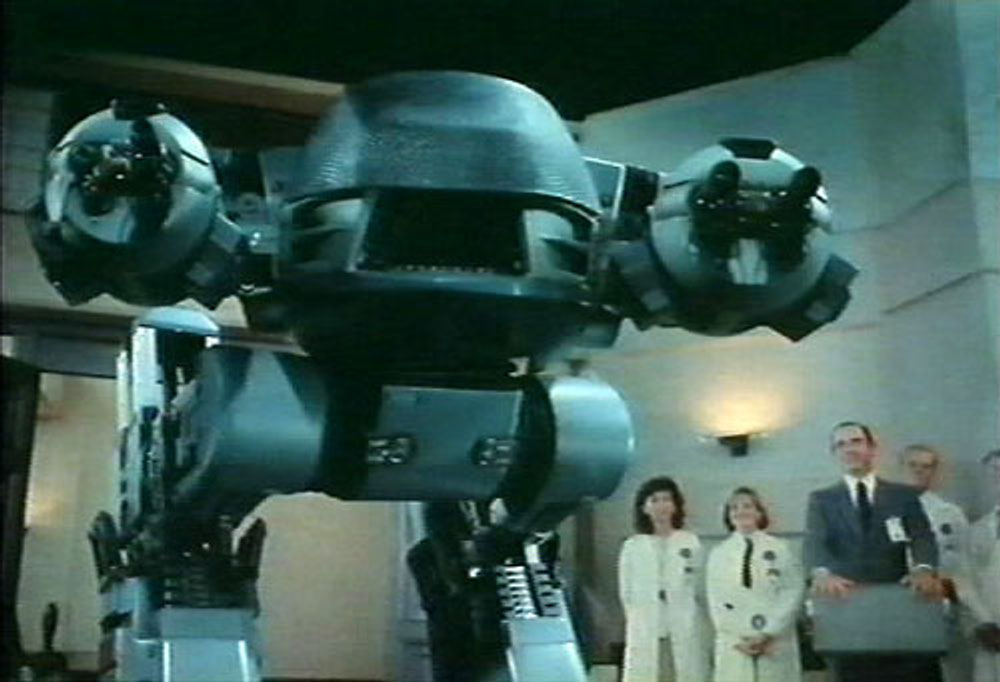

What about Artificial Intelligence? What about it? A perfect robot would be more patient than a human but just as capable of learning the entire system, automating it, and maintaining the automation. But using that system would require the humans around it to either understand as well, or take a leap of faith. People will totally take that leap in order to gratify our laziness, but two or three failures will mean the system is rejected. Can the robot be perfect? If not, can it be cheaper than a human? And is any of this conversation relevant to the far-from-perfect robots we can actually build today? Sometimes.

Update: You might be thinking of reducing risk by not blocking the negative activity, just alerting the SOC… Lorin Hochstein takes on the challenge of deciding to notify.